Structural Equation-VAE: Disentangled Latent Representations for Tabular Data

Published in Preprint, 2025

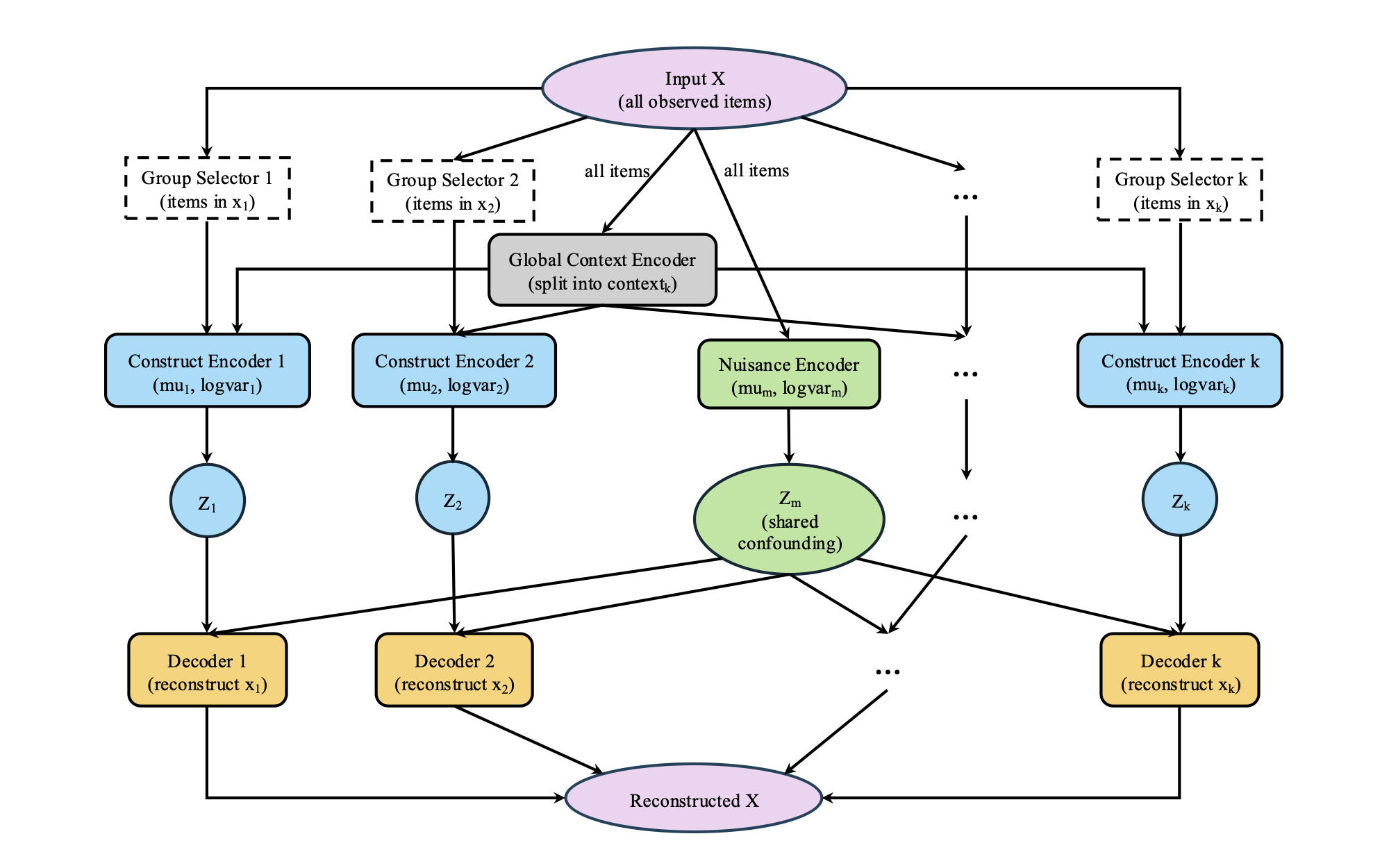

In our work on representation learning for structured data, we tackle the persistent challenge of aligning latent variables with theory-driven constructs. Drawing inspiration from structural equation modeling, we designed SE-VAE (Structural Equation-Variational Autoencoder) to embed measurement structure directly into a variational autoencoder, separating construct-specific signals from global nuisance variation. Evaluated on simulated tabular datasets, SE-VAE consistently recovered underlying factors more accurately and robustly than leading baselines, offering a principled framework for interpretable generative modeling in scientific and social research.
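
To make the idea of embedding measurement structure more concrete, here is a minimal sketch in plain Python. It is not the `sevae` package API and not necessarily how the paper implements it. It only assumes that each observed indicator belongs to exactly one construct and that a shared nuisance latent may influence every indicator. The helper `build_decoder_mask`, the construct names `trust` and `efficacy`, and the column indices are all hypothetical.

```python
def build_decoder_mask(blocks, n_nuisance=1):
    """Build a binary indicator-by-latent mask from a measurement model.

    blocks maps each construct name to the column indices of its indicators
    (hypothetical input format). mask[i][j] == 1 means indicator i is allowed
    to depend on latent j.
    """
    all_cols = sorted(c for cols in blocks.values() for c in cols)
    if all_cols != list(range(len(all_cols))):
        raise ValueError("columns must cover 0..n-1 with no overlap")

    latent_names = list(blocks) + [f"nuisance_{k}" for k in range(n_nuisance)]
    mask = [[0] * len(latent_names) for _ in all_cols]

    # Construct-specific latents reach only their own block of indicators.
    for j, cols in enumerate(blocks.values()):
        for i in cols:
            mask[i][j] = 1

    # Nuisance latents are global: they reach every indicator.
    for row in mask:
        for k in range(n_nuisance):
            row[len(blocks) + k] = 1

    return mask, latent_names


# Hypothetical survey with two constructs measured by five items.
blocks = {"trust": [0, 1, 2], "efficacy": [3, 4]}
mask, latent_names = build_decoder_mask(blocks)
for i, row in enumerate(mask):
    print(f"item {i}: {dict(zip(latent_names, row))}")
```

In a model along these lines, a mask like this could gate decoder weights so that each construct latent explains only its own items, while variation shared across all items is absorbed by the nuisance latent.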

You can install SE-VAE directly from PyPI:

pip install sevae

For full documentation and tutorials, visit the SE-VAE project page. The SSRN version is available here.

Cite as: Ruiyu ZHANG, Ce Zhao, Xin Zhao, Lin Nie, and Wai-Fung Lam. (2025). "Structural Equation-VAE: Disentangled Latent Representations for Tabular Data." Preprint. arXiv:2508.06347. https://arxiv.org/abs/2508.06347