Achieving Semantic Consistency: Contextualized Word Representations for Political Text Analysis

Published in Preprint, 2024

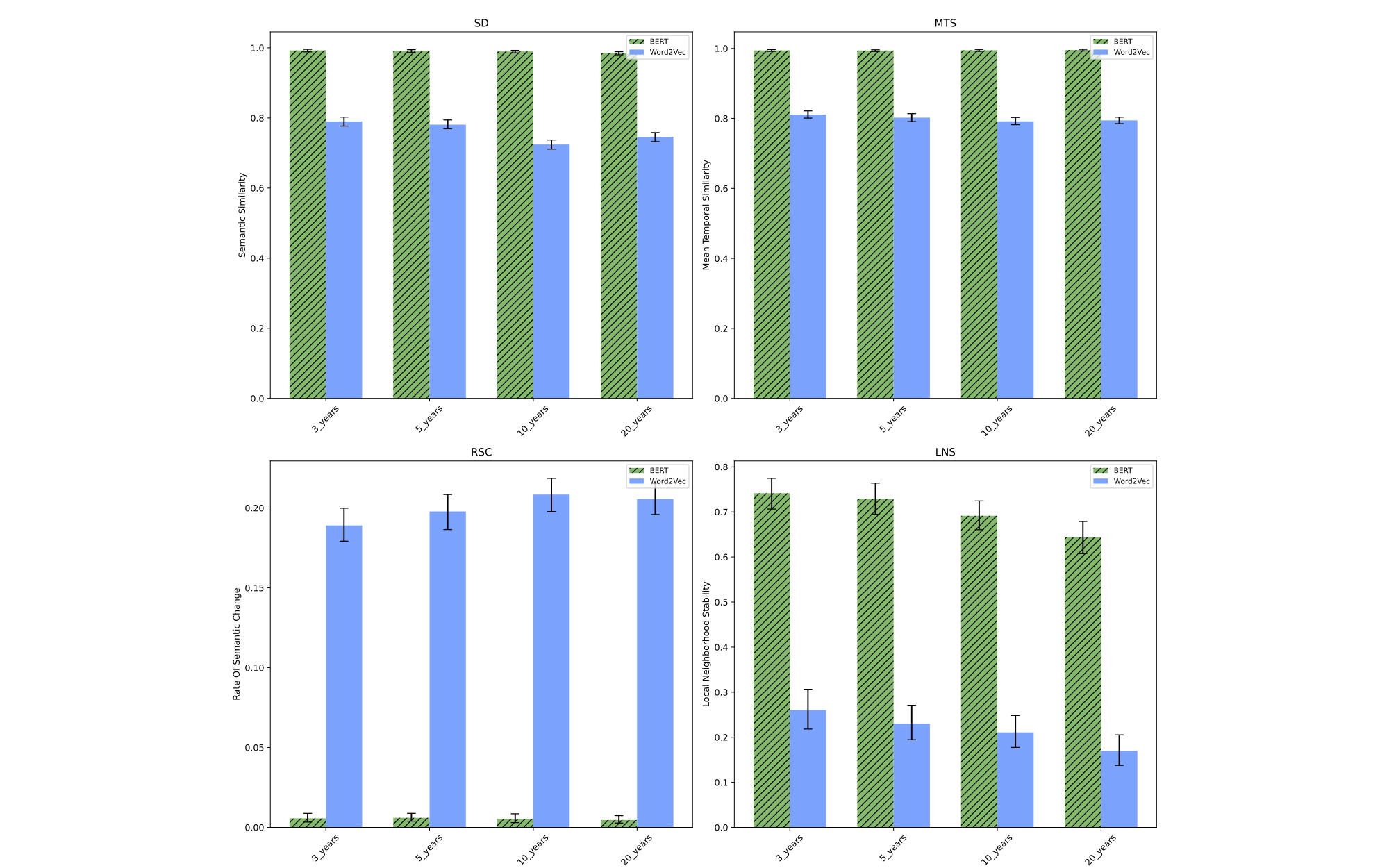

This paper addresses the challenge of maintaining semantic stability over time in political text analysis. Using two decades of People's Daily articles, we compare traditional static embeddings with contextual models and evaluate how well each approach captures both enduring meanings and subtle semantic shifts. The results indicate that contextual models not only provide greater semantic consistency but also detect nuanced variations that static methods often miss.

The SSRN version is available here.

Recommended citation: Ruiyu ZHANG, Lin Nie, Ce Zhao, and Qingyang Chen. (2024). "Achieving Semantic Consistency: Contextualized Word Representations for Political Text Analysis." Preprint. arXiv:2412.04505. https://arxiv.org/abs/2412.04505